# Jabil

Category Archives: CosmosDB

Jabil pilots Azure and Project Brainwave in advanced manufacturing solutions

Jabil provides advanced manufacturing solutions that require visual inspection of components on production lines. Their pilot with Azure Machine Learning and Project Brainwave promises dramatic improvements in speed and accuracy, reducing workload and improving focus for human operators.

Proud to be the lead architect working on advanced Machine Learning solutions and pipelines at Jabil.

Our machine learning development project makes it into //BUILD 2018 Keynote

Working on advanced technologies sometimes comes with the peril of NDAs, which limit what I can talk about… but it is nice to see yet another of our projects featured in a keynote speech by Satya Nadella, this time at Microsoft //BUILD 2018. Proud to be the lead architect working on advanced Machine Learning solutions and pipelines at Jabil.

Our solution included in Microsoft Ignite 2017 Keynote

Azure Cosmos DB, Azure DW, Machine Learning, Deep Learning, Neural Networks, TensorFlow, SQL Server, ASP.NET Core… are just a few of the components that make up one of the solutions we are currently developing.

I have been under a social media embargo until today, but now that the Microsoft Ignite 2017 keynote has taken place, I can proudly say that the solution our team has been working on for some time was part of the keynote addresses.

During the second keynote, led by Scott Guthrie, Danielle Dean, a Data Scientist Lead at Microsoft, discussed at a high level one of the solutions we are developing at Jabil, which involves advanced image recognition of circuit board issues. The keynote focused on the data science portion of the solution and introduced the new Azure Machine Learning Workbench to the packed audience.

Tomorrow morning there is a session – “Using big data, the cloud, and AI to enable intelligence at scale” (Tuesday, September 26, from 9:00 AM to 10:15 AM, in Hyatt Regency Windermere X)… during which we will be going into a bit more detail, and the guys at Microsoft will be expanding on the new AI and Big Data machine learning capabilities (session details via this link).

Awaiting Microsoft Ignite Keynote

Some cool stuff ahead today… keynote coming up…

Cosmos DB Change Feed Processor NuGet package now available & Working with the change feed support

Cosmos DB Change Feed Processor NuGet package now available

Many database systems have features allowing change data capture or mirroring, for use with live backups, reporting, data warehousing and real-time analytics on transactional systems. Azure Cosmos DB has such a feature, called the Change Feed API, which was first introduced in May 2017.

The Change Feed API provides a list of new and updated documents in a partition in the order in which the updates were made.
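To make the ordering idea concrete, here is a minimal in-memory sketch of a single partition's change feed. It assumes a simplified model, not the real service or SDK: each write is stamped with an increasing sequence number, and a reader resumes from the continuation value it got back from its previous read. `Partition`, `upsert` and `read_changes` are hypothetical names. It also mimics the documented behaviour that only the most recent version of a document is kept in the feed, so intermediate updates made between two reads may not be seen.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple

Doc = Dict[str, Any]


@dataclass
class Partition:
    """Toy partition (hypothetical model): every insert or update is stamped
    with the next logical sequence number (LSN)."""
    next_lsn: int = 1
    docs: Dict[str, Tuple[int, Doc]] = field(default_factory=dict)

    def upsert(self, doc: Doc) -> None:
        # An update overwrites the stored version and moves it to the end of the feed.
        self.docs[doc["id"]] = (self.next_lsn, dict(doc))
        self.next_lsn += 1

    def read_changes(self, continuation: int) -> Tuple[List[Doc], int]:
        """Return documents changed after `continuation` (oldest change first)
        and the continuation to pass on the next call."""
        changed = sorted(
            (pair for pair in self.docs.values() if pair[0] > continuation),
            key=lambda pair: pair[0],
        )
        new_token = changed[-1][0] if changed else continuation
        return [doc for _, doc in changed], new_token


p = Partition()
p.upsert({"id": "a", "v": 1})
p.upsert({"id": "b", "v": 1})
batch, token = p.read_changes(0)        # a, then b; token == 2
p.upsert({"id": "a", "v": 2})
p.upsert({"id": "a", "v": 3})
batch2, token2 = p.read_changes(token)  # only the latest "a" (v == 3); token2 == 4
```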

Microsoft has just recently introduced the new Change Feed Processor Library which abstracts the existing Change Feed API to facilitate the distribution of change feed event processing across multiple consumers.

The Change Feed Processor library provides a thread-safe, multiple-process, runtime environment with checkpoint and partition lease management for change feed operations.
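The lease and checkpoint idea can be sketched as follows, assuming one lease per partition and a simple round-robin split across the hosts that are alive. `LeaseManager` and its methods are hypothetical; the real library keeps its leases in a separate Cosmos DB collection and has its own load-balancing logic, so treat this only as an illustration of why checkpoints let another host pick up where a failed one stopped.

```python
from typing import Dict, List


class LeaseManager:
    """Toy lease store (hypothetical): one lease per partition, owned by at most
    one host, plus the last checkpointed continuation for each partition."""

    def __init__(self, partition_ids: List[str]) -> None:
        self.owner: Dict[str, str] = {}
        self.checkpoint: Dict[str, int] = {pid: 0 for pid in partition_ids}

    def rebalance(self, hosts: List[str]) -> None:
        """Spread partition leases round-robin over the currently live hosts."""
        if not hosts:
            raise ValueError("at least one host is required")
        for i, pid in enumerate(sorted(self.checkpoint)):
            self.owner[pid] = hosts[i % len(hosts)]

    def leases_for(self, host: str) -> List[str]:
        return sorted(pid for pid, owner in self.owner.items() if owner == host)

    def save_checkpoint(self, host: str, pid: str, continuation: int) -> None:
        """Record progress; only the lease owner may checkpoint, and
        checkpoints never move backwards."""
        if self.owner.get(pid) != host:
            raise PermissionError(f"{host} does not hold the lease for {pid}")
        self.checkpoint[pid] = max(self.checkpoint[pid], continuation)


leases = LeaseManager(["p0", "p1", "p2", "p3"])
leases.rebalance(["host-a", "host-b"])
a_leases = leases.leases_for("host-a")   # ['p0', 'p2']
leases.save_checkpoint("host-a", "p0", 42)
leases.rebalance(["host-b"])             # host-a disappears; host-b takes every lease
b_leases = leases.leases_for("host-b")   # ['p0', 'p1', 'p2', 'p3']
resume_p0 = leases.checkpoint["p0"]      # 42: host-b resumes p0 where host-a stopped
```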

The Change Feed Processor Library is available as a NuGet package for .NET development. The library makes it easier to read changes from a change feed across multiple partitions and to perform computational actions triggered by the change feed in parallel (aka Complex Event Processing).

Judy Shen from the Microsoft Cosmos DB team has published some sample code on GitHub demonstrating its use.

Working with the change feed support in Azure Cosmos DB

Aravind Ramachandran, Mimi Gentz and Judy Shen also published an article, “Working with the change feed support in Azure Cosmos DB”, on the Azure docs site a few days ago…

2017-7-24

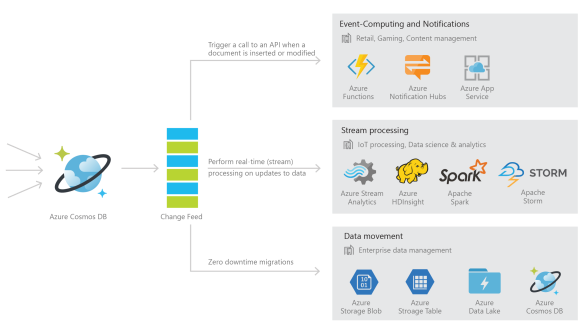

Azure Cosmos DB is a fast and flexible globally replicated database service that is used for storing high-volume transactional and operational data with predictable single-digit millisecond latency for reads and writes. This makes it well-suited for IoT, gaming, retail, and operational logging applications. A common design pattern in these applications is to track changes made to Azure Cosmos DB data, and update materialized views, perform real-time analytics, archive data to cold storage, and trigger notifications on certain events based on these changes. The change feed support in Azure Cosmos DB enables you to build efficient and scalable solutions for each of these patterns.

With change feed support, Azure Cosmos DB provides a sorted list of documents within an Azure Cosmos DB collection in the order in which they were modified. This feed can be used to listen for modifications to data within the collection and perform actions such as:

- Trigger a call to an API when a document is inserted or modified

- Perform real-time (stream) processing on updates

- Synchronize data with a cache, search engine, or data warehouse
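As a rough sketch of the first and third actions, the function below applies a batch of change feed documents to an in-memory cache and calls a callback for each document (standing in for an API call). Everything here is hypothetical. Note that the change feed described in this article surfaces inserts and updates but not deletions, so the sketch assumes the application writes a soft-delete marker (a hypothetical `deleted` field) instead of deleting outright, which is one commonly suggested workaround.

```python
from typing import Any, Callable, Dict, Iterable, List

Doc = Dict[str, Any]


def process_batch(batch: Iterable[Doc], cache: Dict[str, Doc],
                  on_change: Callable[[Doc], None]) -> int:
    """Apply one batch of change feed documents to a cache and fire a
    per-document action. Returns the number of documents processed."""
    seen = 0
    for doc in batch:
        if doc.get("deleted"):        # hypothetical soft-delete marker written by the app
            cache.pop(doc["id"], None)
        else:
            cache[doc["id"]] = doc    # the feed carries the whole current document
        on_change(doc)
        seen += 1
    return seen


cache: Dict[str, Doc] = {"7": {"id": "7", "name": "Lin"}}
notified: List[str] = []
n = process_batch(
    [{"id": "42", "name": "Ada"}, {"id": "7", "deleted": True}],
    cache,
    lambda d: notified.append(d["id"]),
)
```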

Changes in Azure Cosmos DB are persisted and can be processed asynchronously, and distributed across one or more consumers for parallel processing. Let’s look at the APIs for change feed and how you can use them to build scalable real-time applications. This article shows how to work with Azure Cosmos DB change feed and the DocumentDB API.

Note

Change feed support is only provided for the DocumentDB API at this time; the Graph API and Table API are not currently supported.

Use cases and scenarios

Change feed allows for efficient processing of large datasets with a high volume of writes, and offers an alternative to querying entire datasets to identify what has changed. For example, you can perform the following tasks efficiently:

- Update a cache, search index, or a data warehouse with data stored in Azure Cosmos DB.

- Implement application-level data tiering and archival, that is, store “hot data” in Azure Cosmos DB, and age out “cold data” to Azure Blob Storage or Azure Data Lake Store.

- Implement batch analytics on data using Apache Hadoop.

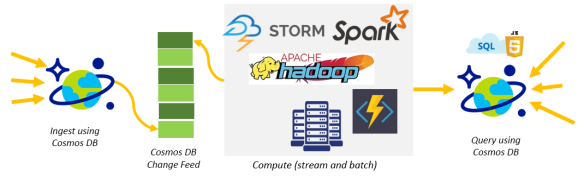

- Implement lambda pipelines on Azure with Azure Cosmos DB. Azure Cosmos DB provides a scalable database solution that can handle both ingestion and query, and implement lambda architectures with low TCO.

- Perform zero down-time migrations to another Azure Cosmos DB account with a different partitioning scheme.
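For the data tiering case, one possible way to pick what to age out is to compare each document's `_ts` system property (its last-modified time, in seconds since the Unix epoch) with a cut-off. The function name and the 90-day threshold are assumptions for illustration, and copying the cold set to Blob Storage or Data Lake Store is left out.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Tuple

Doc = Dict[str, Any]


def split_hot_cold(docs: List[Doc], now: datetime,
                   max_age: timedelta) -> Tuple[List[Doc], List[Doc]]:
    """Split documents by last-modified time (`_ts`, epoch seconds):
    recent ones stay hot, older ones are candidates to age out."""
    cutoff = now - max_age
    hot: List[Doc] = []
    cold: List[Doc] = []
    for doc in docs:
        modified = datetime.fromtimestamp(doc["_ts"], tz=timezone.utc)
        (hot if modified >= cutoff else cold).append(doc)
    return hot, cold


now = datetime(2017, 7, 24, tzinfo=timezone.utc)
docs = [
    {"id": "recent", "_ts": int((now - timedelta(days=2)).timestamp())},
    {"id": "old", "_ts": int((now - timedelta(days=400)).timestamp())},
]
hot, cold = split_hot_cold(docs, now, timedelta(days=90))
```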

Lambda Pipelines with Azure Cosmos DB for ingestion and query

You can use Azure Cosmos DB to receive and store event data from devices, sensors, infrastructure, and applications, and process these events in real-time with Azure Stream Analytics, Apache Storm, or Apache Spark.

Within web and mobile apps, you can track events such as changes to your customer’s profile, preferences, or location to trigger certain actions like sending push notifications to their devices using Azure Functions or App Services. If you’re using Azure Cosmos DB to build a game, you can, for example, use change feed to implement real-time leaderboards based on scores from completed games.
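A leaderboard like that could be maintained incrementally from the change feed, keeping each player's best score as completed-game documents arrive. This is a minimal sketch; the `player`, `score` and `status` fields are hypothetical, not a schema from the article.

```python
import heapq
from typing import Any, Dict, Iterable, List, Tuple


class Leaderboard:
    """Keeps each player's best score, updated from change feed documents."""

    def __init__(self) -> None:
        self.best: Dict[str, int] = {}

    def apply(self, games: Iterable[Dict[str, Any]]) -> None:
        for game in games:
            if game.get("status") != "completed":   # ignore games still in progress
                continue
            player, score = game["player"], game["score"]
            if player not in self.best or score > self.best[player]:
                self.best[player] = score

    def top(self, n: int) -> List[Tuple[str, int]]:
        # Highest score first; ties broken alphabetically by player name.
        return heapq.nsmallest(n, self.best.items(), key=lambda kv: (-kv[1], kv[0]))


board = Leaderboard()
board.apply([
    {"player": "ana", "score": 120, "status": "completed"},
    {"player": "bo", "score": 90, "status": "completed"},
    {"player": "ana", "score": 80, "status": "completed"},
    {"player": "cy", "score": 500, "status": "in_progress"},
])
leaders = board.top(2)   # [('ana', 120), ('bo', 90)]
```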

…

Read more at https://docs.microsoft.com/en-gb/azure/cosmos-db/change-feed

–

MEAN.js with Cosmos DB on Azure

(a YouTube series by John Papa)

Cosmos DB is of significant interest to me for projects I have been engaged in over the past couple of years, which use MongoDB and MEAN in several ways. Scaling has always been a bit of a pain for us with MongoDB, and Cosmos DB on Azure looks set to relieve a lot of the headaches we have had.

MEAN stands for MongoDB, Express, Angular and Node.

I am not the author of these videos; this is a reference list for a YouTube series by John Papa introducing MEAN with Cosmos DB on Azure. I would normally just link directly to the creator’s blog or post for a series such as this, but it seems to be offline just now, so I thought I would share a full list of the current videos here. Hopefully the original link, https://johnpapa.net/angular-cosmosdb-1/, will work again soon.

MEAN.js with Cosmos DB – Part 1: Introduction

John builds a lot of apps with MongoDB, Express, Angular and Node (MEAN). MongoDB works so well with these, but recently he has been using Cosmos DB on Azure in its place because it is easy to use and scale, it is super fast, and he does not have to change how he codes.

MEAN.js with Cosmos DB – Part 2: Creating the Node.js and Express App

Creating a Node.js and Express app along with the Angular CLI, then creating a web API endpoint and trying it out.

MEAN.js with Cosmos DB – Part 3: Angular and Express APIs

The A in MEAN stands for Angular. This video shows how to build an Angular UI that talks to the Express API, with GET, POST, PUT, and DELETE.

MEAN.js with Cosmos DB – Part 4: Creating and Deploying Cosmos DB

Using the Azure CLI to create a Cosmos DB account that uses the MongoDB model and deploy it to Azure, then viewing what was created in the Azure portal.

MEAN.js with Cosmos DB – Part 5: Querying Cosmos DB

How to connect to the MongoDB database with Azure Cosmos DB, using Mongoose, and query it for data.

You can subscribe to John’s YouTube series at https://www.youtube.com/playlist?list=PLbnXt_I6OfBWU9JiDNewZm11-7eFQf70M or follow him on twitter @John_Papa

RESTful interactions with Azure Cosmos DB resources using the DocumentDB API, CRUD operations, API for MongoDB and additional quick starts

Azure Cosmos DB is a globally distributed system that supports the document, graph, and key-value data models; Microsoft classifies it as a multi-model database service for mission-critical systems.

It also supports both the API for MongoDB and the DocumentDB API for creating, querying, and managing resources.

If you would like to understand how to answer any of the following questions: –

- How do the standard HTTP methods work with Azure Cosmos DB resources?

- How do I create a new resource using POST?

- How do I register a stored procedure using POST?

- How does Azure Cosmos DB support concurrency control?

- What are the connectivity options for HTTPS and TCP?
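On the concurrency question: Azure Cosmos DB uses optimistic concurrency, where each resource carries an `_etag` and a write sent with an `If-Match` header fails with HTTP 412 (Precondition Failed) if the ETag no longer matches. The sketch below models that behaviour with a hypothetical in-memory `ToyStore`; it is not the service or an SDK.

```python
import uuid
from typing import Any, Dict, Optional, Tuple

Doc = Dict[str, Any]


class PreconditionFailed(Exception):
    """Stands in for the HTTP 412 Precondition Failed response."""


class ToyStore:
    """Hypothetical in-memory store that versions each document with an ETag."""

    def __init__(self) -> None:
        self.docs: Dict[str, Tuple[str, Doc]] = {}

    def create(self, doc: Doc) -> str:
        etag = uuid.uuid4().hex
        self.docs[doc["id"]] = (etag, dict(doc))
        return etag

    def read(self, doc_id: str) -> Tuple[str, Doc]:
        etag, body = self.docs[doc_id]
        return etag, dict(body)          # hand out a copy, like a fresh GET

    def replace(self, doc: Doc, if_match: Optional[str] = None) -> str:
        current_etag, _ = self.docs[doc["id"]]
        # With If-Match, the write only succeeds if nobody changed the document since our read.
        if if_match is not None and if_match != current_etag:
            raise PreconditionFailed(f"412: ETag {if_match} is stale")
        new_etag = uuid.uuid4().hex
        self.docs[doc["id"]] = (new_etag, dict(doc))
        return new_etag


store = ToyStore()
store.create({"id": "order-1", "qty": 1})
etag_a, doc_a = store.read("order-1")    # client A reads
etag_b, doc_b = store.read("order-1")    # client B reads the same version
doc_a["qty"] = 2
store.replace(doc_a, if_match=etag_a)    # A wins; the ETag changes
doc_b["qty"] = 5
try:
    store.replace(doc_b, if_match=etag_b)
    conflict = False
except PreconditionFailed:
    conflict = True                      # B must re-read and retry
```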

Interaction model using the standard HTTP methods

Then take a look at the Azure Cosmos DB REST API reference, first published on 18th July 2017, which covers these topics in full.

If interested in performing CRUD operations using REST, see Common tasks using the Azure Cosmos DB REST API.
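For orientation before reading those pages, here is a sketch of the master-key `Authorization` header that REST calls need, following the HMAC-SHA256 signing scheme in the Cosmos DB REST API access control documentation as I understand it. The key, database, collection and document names are placeholders, and `2017-02-22` is just one example API version; check the docs for the version you target.

```python
import base64
import hashlib
import hmac
import urllib.parse
from email.utils import formatdate


def auth_token(verb: str, resource_type: str, resource_link: str,
               date: str, master_key: str) -> str:
    """Build the Authorization header value for a master-key signed request."""
    key = base64.b64decode(master_key)
    # Verb, resource type and date are lower-cased; the resource link keeps its case.
    payload = f"{verb.lower()}\n{resource_type.lower()}\n{resource_link}\n{date.lower()}\n\n"
    digest = hmac.new(key, payload.encode("utf-8"), hashlib.sha256).digest()
    signature = base64.b64encode(digest).decode("utf-8")
    return urllib.parse.quote(f"type=master&ver=1.0&sig={signature}", safe="")


date = formatdate(usegmt=True)                           # RFC 1123, e.g. "Mon, 24 Jul 2017 10:00:00 GMT"
fake_key = base64.b64encode(b"not-a-real-key").decode()  # placeholder, not a real account key
headers = {
    "Authorization": auth_token("GET", "docs", "dbs/ToDoList/colls/Items/docs/1", date, fake_key),
    "x-ms-date": date,             # must be the same date string that was signed
    "x-ms-version": "2017-02-22",
}
```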

If interested in performing CRUD operations using C# and REST, see the REST from .NET Sample on GitHub which can help you out.

If interested in more details of the MongoDB API, then see Introduction to Azure Cosmos DB: API for MongoDB which covers the benefits of using Azure Cosmos DB for MongoDB applications.

MongoDB wire protocol

… and finally if looking for help getting started then the following MongoDB quick starts will help you out: –

- Migrate an existing Node.js MongoDB web app.

- Build a MongoDB API web app with .NET and the Azure portal

- Build a MongoDB API console app with Java and the Azure portal

and also: –

- Connect to a MongoDB account covers how to get your account connection string information.

- Use MongoChef with Azure Cosmos DB tutorial covers how to create a connection between your Azure Cosmos DB database and MongoDB app in MongoChef.

- Migrate data to Azure Cosmos DB with protocol support for MongoDB tutorial covers importing your data to an API for MongoDB database.

- Robomongo covers connecting to the API for MongoDB account using Robomongo.

- GetLastRequestStatistics command and the Azure portal metrics cover how to see how many RUs (request units) your operations are using.

- … and configure read preferences for globally distributed apps.

Azure Cosmos DB with Scott Hanselman

Kirill Gavrylyuk stops by Azure Friday to talk Cosmos DB with Scott Hanselman.

Watch this quick overview of the industry’s first globally distributed multi-model database service followed by a demo of moving an existing MongoDB app to Cosmos DB with a single config change.
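The "single config change" in demos like this is typically the MongoDB connection string. Here is a sketch of building one, assuming the format the Azure portal showed for API for MongoDB accounts at the time (port 10255, `ssl=true`, `replicaSet=globaldb`); the account name and key are placeholders, and the connection string shown in the portal is the authoritative value.

```python
from urllib.parse import quote_plus


def cosmos_mongo_uri(account: str, key: str, port: int = 10255) -> str:
    """Build a MongoDB connection string for a Cosmos DB account (assumed format).
    The key is URL-encoded because account keys contain '+', '/' and '='."""
    return (
        f"mongodb://{quote_plus(account)}:{quote_plus(key)}"
        f"@{account}.documents.azure.com:{port}/?ssl=true&replicaSet=globaldb"
    )


uri = cosmos_mongo_uri("demo-acct", "abc+/def==")   # placeholder account and key
```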

For more information, see: https://azure.microsoft.com/en-us/services/cosmos-db/

Querying Azure Cosmos DB resources using the REST API

Azure Cosmos DB is a globally distributed multi-model database with support for multiple APIs. This is a link to an article which describes how to use REST to query resources using the Azure Cosmos DB API – https://docs.microsoft.com/en-us/rest/api/documentdb/querying-documentdb-resources-using-the-rest-api

All Cosmos DB resources (with the exception of account resources) can be queried using Azure Cosmos DB SQL language. See Query with Azure Cosmos DB SQL for additional details on syntax – http://azure.microsoft.com/documentation/articles/documentdb-sql-query
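As a rough illustration of what that article describes, a query is sent as a POST to a collection's `docs` feed, with `Content-Type: application/query+json`, an `x-ms-documentdb-isquery: True` header and a JSON body holding the SQL text and its parameters. This sketch only builds the request (it does not send it), and the database, collection and parameter names are hypothetical.

```python
import json
from typing import Any, Dict, Tuple


def build_query_request(db: str, coll: str, sql: str,
                        params: Dict[str, Any]) -> Tuple[str, str, Dict[str, str], str]:
    """Return (method, path, headers, body) for a SQL query over a collection's documents.
    The Authorization, x-ms-date and x-ms-version headers are left out for brevity."""
    path = f"/dbs/{db}/colls/{coll}/docs"
    headers = {
        "Content-Type": "application/query+json",   # marks the body as a query
        "x-ms-documentdb-isquery": "True",
    }
    body = json.dumps({
        "query": sql,
        "parameters": [{"name": name, "value": value} for name, value in params.items()],
    })
    return "POST", path, headers, body


method, path, headers, body = build_query_request(
    "ToDoList", "Items",
    "SELECT * FROM c WHERE c.category = @category",
    {"@category": "personal"},
)
```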

For a full sample using .NET visit https://github.com/Azure/azure-documentdb-dotnet/tree/master/samples/rest-from-.net